A product portfolio built around efficient AI compute

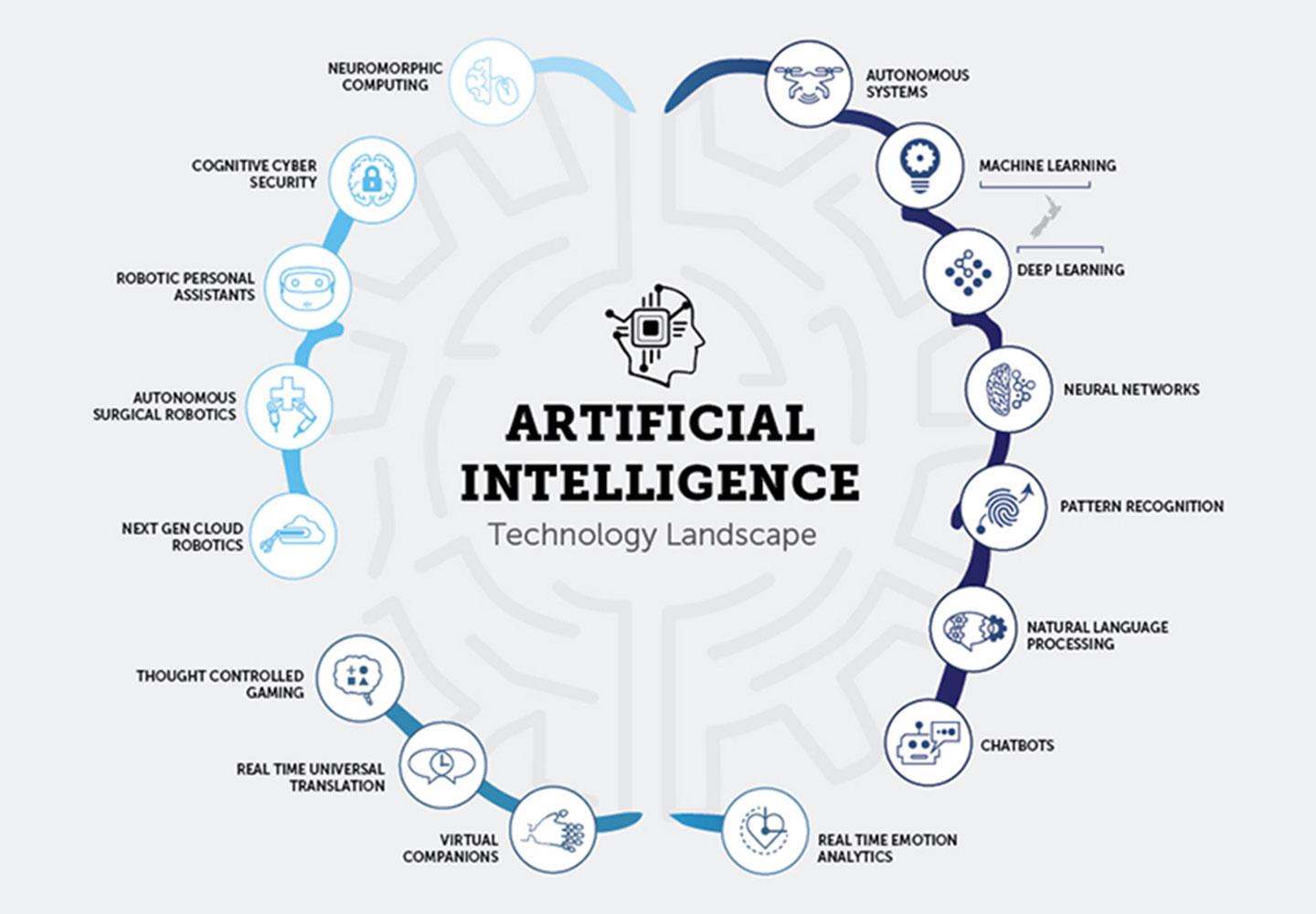

ACE3's portfolio spans inference acceleration, LLM optimisation, cloud infrastructure, diffusion performance, and AI application development workflows.

Two products focus on production inference, where performance and cost to serve are most exposed: ACE3Suite, the general-purpose inference toolkit, and ACE3LLM, which is specialised for large language model deployment.

The wider portfolio extends ACE3 into cloud infrastructure, specialised diffusion acceleration, and AI development lifecycle tooling, giving the company multiple entry points into the AI stack.

PyTorch

TensorFlow

JAX

ONNX

Speak with the team if you are evaluating product fit, strategic partnerships, or the company's broader position in AI infrastructure.